ChatGPT Is Revolutionary — But It Calculates One Word At A Time

Receiving a concise answer to a web search is vastly preferable to receiving a long list of search results. ChatGPT provides a glimpse of what the search engine of the future could look like. Based on extensive use of the platform over the past few months, I must say that this new way of searching saves me a lot of time on a daily basis. Only in a few cases do I revert to Google Search, and every time I do, I wish that I did not have to weed through a pile of search results to find what I am looking for. But there are downsides to the ChatGPT approach of searching the web.

ChatGPT Does Not Understand The World

Large language models such as ChatGPT respond to us humans as if they actually understood our questions or requests in a way similar to how our human brains do. They come up with intuitive-sounding responses instead of a long list of partially sponsored, partially otherwise tweaked Google search results. These human-like answers can give us the impression that ChatGPT actually understands the topic and context of our question and comes up with an intuitive, well-informed, and balanced answer. However, the reality is very different, and users of ChatGPT and any other large language model must be aware of how these human-like answers are generated. Here is ChatGPT’s answer to this question.

Why are your answers so human-like? Do you understand our world?

As an AI language model, I have been trained on a large dataset of human language and have learned to generate human-like responses based on that training. However, I do not have a true understanding of the world like humans do. I do not have emotions or experiences, and I do not possess consciousness or self-awareness. I simply analyze patterns in language and generate responses based on those patterns.

Source: ChatGPT

It is critical for users of ChatGPT to keep this in mind, as there is a big difference between pattern matching and the way our human brain processes information.

ChatGPT Does Not Understand Language

When OpenAI says that ChatGPT understands the meaning of human language, the definition of ‘meaning’ is very different for ChatGPT than it is for us humans. You can think of it like this: ChatGPT lives in a world defined by hundreds of millions of training documents from a multitude of different sources (websites, public databases, books, journals, archive data, etc.). The training happens in an unsupervised manner where ChatGPT learns exclusively by analyzing text patterns, without receiving any explanations of ‘how things work.’ Take a look at how ChatGPT explains its unsupervised learning process:

How can you figure out the meaning of the English language without a human explaining it to you?

As an AI language model, I have been trained on a large dataset of text, including books, articles, and other written materials, which has allowed me to learn the patterns and structures of the English language. This training allows me to identify the meanings of words and phrases based on how they have been used in context in the dataset.

When presented with a new sentence, I analyze it based on the patterns and structures I have learned from the training data, which allows me to make predictions about the meaning of the words and phrases in the sentence. These predictions are based on statistical models that have been built using the patterns and relationships between words and phrases in the training data.

While I don’t have a true understanding of the world, I can use this knowledge to generate human-like responses to questions and conversations.

Source: ChatGPT

A Different Definition Of ‘Meaning’

It is important to understand that ChatGPT processes language in a fundamentally different manner than we humans do. While we have a rich understanding of the world and can use context, background knowledge, and common sense to interpret language, ChatGPT relies entirely on the analysis of statistical patterns in the text it was trained on. Large language models do not have any real-world knowledge, nor do they understand the concepts they are talking about. All they do is pattern matching based on their internal neural network, which was trained by processing millions of documents. Therefore, ChatGPT can only generate accurate answers when there is enough example data available to calculate these answers in a purely mathematical manner. Intuition and ‘common sense’ are not available to large language models. We need to remember that this can lead to outrageous mistakes because human-type ‘sanity checks’ are absent.

Meaning As A Statistical Challenge

ChatGPT treats ‘meaning’ as a math problem where the model creates sentences based on the probability of a word occurring in a similar sentence and within a similar context. To do this, ChatGPT represents words as number sequences that it then compares to other number sequences within a similar context. This context, of course, also consists of numbers. Now it becomes very clear why millions of documents are required to allow ChatGPT to basically translate the entire world into an unimaginably large map of numbers. But what does that mean for ChatGPT’s understanding of our world?

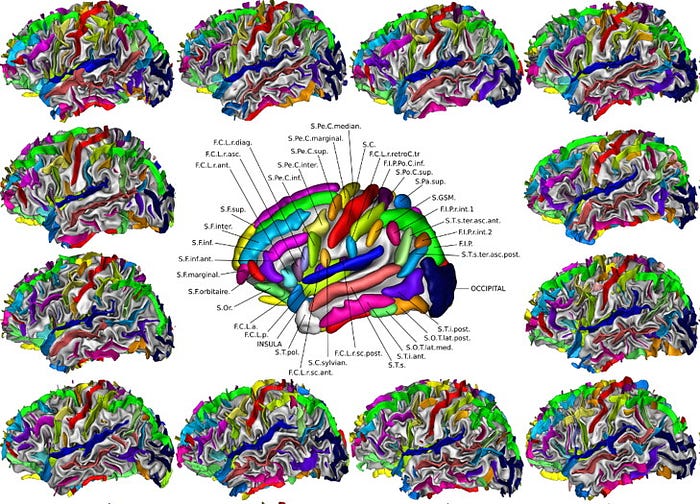

The chart shows how a neural network captures the essence of text by reducing its dimensionality. While the left part shows high complexity with many different dimensions of meaning, the right part is reduced to only three dimensions (Source: https://www.sciencedirect.com/topics/computer-science/multidimensional-space)
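
To make the idea of words as number sequences more concrete, here is a minimal sketch in Python. It is not how ChatGPT works internally: the four-sentence corpus, the function names, and the simple neighbor counting are all assumptions made for illustration, standing in for the learned representations of a real neural network. Each word is turned into a list of counts of the words that appear near it, and two words are then compared purely by comparing those numbers.

```python
import math
from collections import Counter

# Toy corpus invented for this illustration; real models train on vastly more text.
CORPUS = [
    "the dog chased the ball in the park",
    "the puppy chased the ball in the garden",
    "the bank raised the interest rate",
    "the bank lowered the interest rate",
]

def context_vectors(sentences, window=2):
    """Map each word to counts of the words seen within `window` positions of it."""
    vectors = {}
    for sentence in sentences:
        words = sentence.split()
        for i, word in enumerate(words):
            lo, hi = max(0, i - window), min(len(words), i + window + 1)
            neighbours = words[lo:i] + words[i + 1:hi]
            vectors.setdefault(word, Counter()).update(neighbours)
    return vectors

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

vectors = context_vectors(CORPUS)
sim_dog_puppy = cosine(vectors["dog"], vectors["puppy"])
sim_dog_rate = cosine(vectors["dog"], vectors["rate"])
print(f"dog vs puppy: {sim_dog_puppy:.2f}")
print(f"dog vs rate:  {sim_dog_rate:.2f}")
```

In this toy setup, "dog" and "puppy" score as more similar than "dog" and "rate" simply because they appear next to the same words. Nothing in the code knows what a dog is, which is the point of the comparison.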

Our World In Numbers

Translating our world into massive sequences of numbers means that large language models are unable to understand concepts that they cannot directly calculate from training data. This leads to human-like answers when there is sufficient training data and to complete ‘strikeouts’ when there is none. ChatGPT never experienced what it is like to visit a park, play football, or drink water. Instead, language models receive this information second hand via text data from many different sources. They translate training data describing these experiences into sequences of numbers. They then calculate the probability of certain terms occurring next within the answer to a specific user question. There are two fundamental differences between this approach and our human approach:

ChatGPT Cannot Generalize Knowledge

The ability to apply knowledge across fundamentally different contexts sets us humans apart from large language models like ChatGPT. This is due to our brain’s ability to recognize and generalize underlying concepts across different domains. ChatGPT can only transfer knowledge from one domain to another when there is a sufficient number of examples that overlap in their terminology, without containing domain-specific vocabulary. In other words, there needs to be enough data for ChatGPT to calculate probabilities. Large language models are not able to ‘fill in the gaps’ by applying lessons from a different domain.
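
The dependence on available data can be sketched with a deliberately simple stand-in. The Python example below counts which word follows which in a tiny invented text and turns those counts into next-word probabilities. Real large language models use neural networks over much longer contexts rather than a lookup table of word pairs, so the training text and the function names here are purely hypothetical.

```python
from collections import Counter, defaultdict

# Tiny invented training text; a stand-in for the documents a real model is trained on.
TRAINING_TEXT = (
    "we play football in the park . "
    "we play football in the garden . "
    "we play football in the park . "
    "we drink water in the kitchen ."
)

def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def next_word_probabilities(counts, word):
    """Turn follower counts into probabilities; empty if the word was never seen."""
    followers = counts.get(word)
    if not followers:
        return {}
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}

model = train_bigrams(TRAINING_TEXT)
probs_after_the = next_word_probabilities(model, "the")
probs_after_tennis = next_word_probabilities(model, "tennis")
print(probs_after_the)
print(probs_after_tennis)
```

After "the", the sketch assigns "park" a probability of 0.5 and "garden" and "kitchen" 0.25 each, because that is what the counts say. After "tennis", a word it has never seen, it has nothing to offer at all: it cannot reason that tennis, like football, is something people play in a park.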

The Risk of Bias

If we treat the understanding of text as a purely mathematical problem of pattern recognition, it becomes clear that preventing bias depends mainly on humans curating the input data. However, this is not feasible at the scale of large language models such as GPT. As a result, GPT ingests more examples of popular answers than of less popular but perhaps equally valid ones, and ChatGPT is therefore much more likely to come up with mainstream responses. This can end in a dangerous spiral when humans use ChatGPT’s answers to create more documents that are ultimately fed back into ChatGPT’s training data set. In this case, ChatGPT would continuously drink its own Kool-Aid, leading to increasingly narrow results. Keep in mind that it is the job of data scientists to configure the learning model in a way that prevents these spirals from occurring. For example, ChatGPT clearly has safeguards built in that balance out answers by explicitly mentioning pros and cons, even when you only asked for pros. ChatGPT is also aware of contradicting answers to the same question and often presents the user with both sides of the story.
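
The spiral described above can be illustrated with a toy simulation in Python. Everything in it is an assumption made for the sake of the example: the three answer categories, their starting shares, and especially the `sharpening` exponent, which stands in for a model that favors popular answers somewhat more than their share of the training data would justify. It says nothing about how OpenAI actually trains or safeguards its models.

```python
import math

def entropy(dist):
    """Shannon entropy in bits; lower values mean less diverse answers."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)

def retrain_on_own_output(dist, sharpening=1.5):
    """One round of the loop: popular answers are over-produced, then fed back as training data.

    `sharpening` is a hypothetical parameter; values above 1 favor popular answers.
    """
    weights = {answer: p ** sharpening for answer, p in dist.items()}
    total = sum(weights.values())
    return {answer: w / total for answer, w in weights.items()}

# Hypothetical starting shares of three kinds of answers in the training data.
answers = {"mainstream": 0.5, "alternative": 0.3, "niche": 0.2}
history = [answers]
for _ in range(5):
    history.append(retrain_on_own_output(history[-1]))

for round_no, dist in enumerate(history):
    shares = {k: round(v, 3) for k, v in dist.items()}
    print(round_no, shares, "entropy:", round(entropy(dist), 3))
```

Under these assumptions, the mainstream share grows with every round while the entropy of the distribution falls, which is the narrowing effect in miniature. Setting `sharpening` to 1 leaves the shares unchanged, a reminder that the spiral depends on how the model is configured.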

Here is ChatGPT’s pretty nuanced take on bias:

Bias can be a problem for any machine learning model, including ChatGPT. As a language model that has been trained on massive amounts of text data, ChatGPT may reflect biases present in the data it was trained on.

For example, if the training data contains biased language or perspectives related to gender, race, or other social factors, the model may learn and replicate those biases in its language generation or response selection. Additionally, if the training data is not representative of the diversity of human experiences and perspectives, the model may have difficulty generating responses that are inclusive and respectful to all users.

To address these issues, there have been efforts to develop methods for detecting and mitigating bias in language models like ChatGPT. One approach is to use a diverse range of training data that represents a variety of perspectives and experiences. Another approach is to develop techniques for debiasing language models, such as modifying the training data or fine-tuning the model to reduce or eliminate biased language patterns.

However, it is important to note that bias is a complex issue that cannot be fully eliminated from machine learning models. Developers and researchers need to be aware of the potential for bias in their models and work to minimize it as much as possible, while also recognizing that bias is a broader societal issue that requires ongoing efforts beyond the scope of machine learning.

Source: ChatGPT, March 8, 2023

Final Thoughts

All of the above in no way negates the massive upsides of large language models. As users of these models, we must take their answers for what they really are: the result of an unimaginably complex set of mathematical calculations that match data from ‘the entire internet’ to the question we have just asked and then come up with the sequence of words that has the highest probability of fitting our question.

Finally, we should remember that Google’s search results are also created in an opaque manner that has triggered many lawsuits accusing the company of boosting the search ranking of its own products or of ranking results based on the ad spend of its customers and partners. Just ask Yelp. Therefore, whatever search platform we use, we always need to question and cross-check results, which is indeed a hard thing to do.